Post provided by Jeremy D. Pustilnik and Genevieve S. Rios

Natural history museums around the world collectively hold over one billion specimens in their collections, from animal skins and fossils to pressed plants, minerals, and cultural heritage artifacts. Only a small fraction of these objects is ever placed on public display; most remain in collection cabinets, where they are studied by scientists but rarely seen by broader audiences. Researchers who want to study specimens must often travel to distant museums or request physical loans, which can be costly and time-consuming and can put fragile materials at risk during shipping. Even as collections grow, these logistical barriers continue to limit access to the scientific information contained within them.

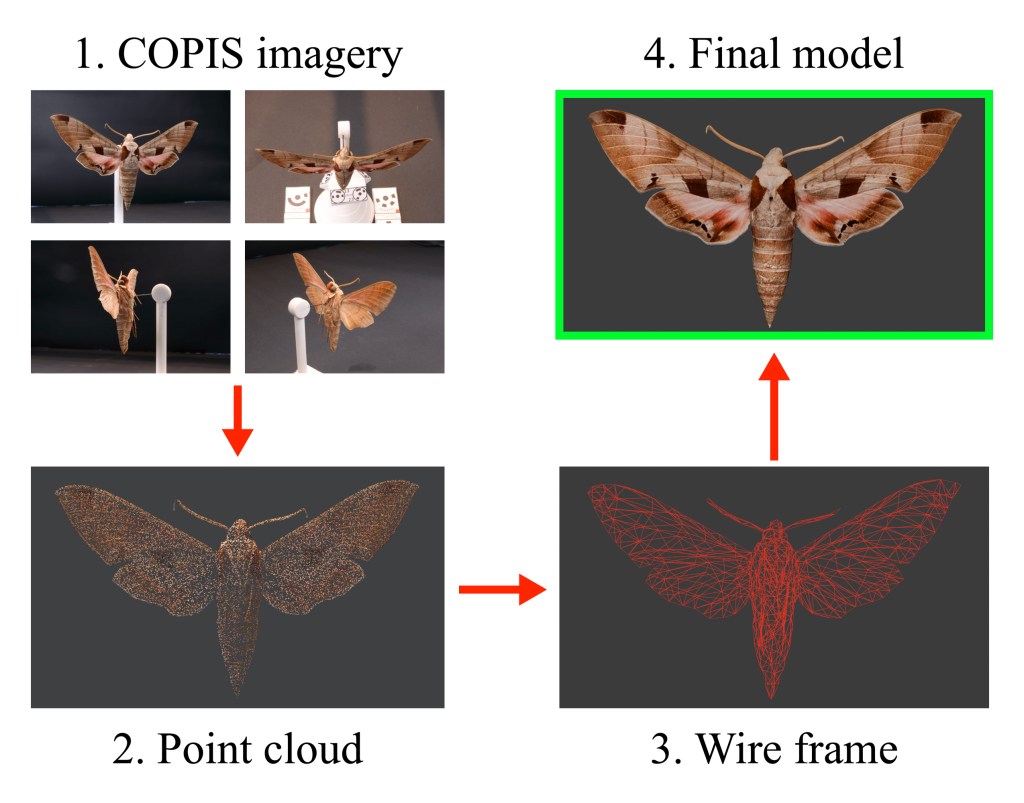

However, advances in 3D modelling are beginning to remedy this. High-resolution digital models of natural history specimens can be created in true scale and color, allowing researchers to share specimens and access them instantly from anywhere in the world, both for examination and for use in computational analyses. In some cases, digital models even allow scientists to take measurements that are difficult or impossible to obtain from physical specimens, such as surface area or volume. To help make large-scale 3D digitization possible, we developed COPIS, the Computer Operated Photogrammetric Imaging System. COPIS combines robotics and photogrammetry in a single high-throughput, open-source, and customizable platform for generating 3D models of natural history specimens.
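To illustrate why surface area and volume become easy to measure once a specimen exists as a digital mesh, here is a minimal Python sketch. It is not part of COPIS; the function name `mesh_area_and_volume` and the unit-cube test mesh are hypothetical, and the sketch assumes a closed ("watertight") mesh whose triangles are consistently oriented.

```python
from math import sqrt


def mesh_area_and_volume(vertices, triangles):
    """Return (surface_area, volume) for a closed triangle mesh.

    vertices  -- list of (x, y, z) coordinates
    triangles -- list of (i, j, k) vertex indices, consistently oriented
    """
    area = 0.0
    signed_volume = 0.0
    for i, j, k in triangles:
        ax, ay, az = vertices[i]
        bx, by, bz = vertices[j]
        cx, cy, cz = vertices[k]

        # Two edge vectors of the triangle, both starting at vertex a.
        ux, uy, uz = bx - ax, by - ay, bz - az
        vx, vy, vz = cx - ax, cy - ay, cz - az

        # Half the length of their cross product is the triangle's area.
        nx = uy * vz - uz * vy
        ny = uz * vx - ux * vz
        nz = ux * vy - uy * vx
        area += 0.5 * sqrt(nx * nx + ny * ny + nz * nz)

        # Signed volume of the tetrahedron (origin, a, b, c): a . (b x c) / 6.
        # Summed over a closed mesh, these add up to the enclosed volume.
        signed_volume += (
            ax * (by * cz - bz * cy)
            + ay * (bz * cx - bx * cz)
            + az * (bx * cy - by * cx)
        ) / 6.0
    return area, abs(signed_volume)


# Hypothetical test mesh: a unit cube split into 12 outward-facing triangles.
CUBE_VERTICES = [
    (0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0),
    (0, 0, 1), (1, 0, 1), (1, 1, 1), (0, 1, 1),
]
CUBE_TRIANGLES = [
    (0, 3, 2), (0, 2, 1),  # bottom
    (4, 5, 6), (4, 6, 7),  # top
    (0, 1, 5), (0, 5, 4),  # front
    (3, 7, 6), (3, 6, 2),  # back
    (0, 4, 7), (0, 7, 3),  # left
    (1, 2, 6), (1, 6, 5),  # right
]

cube_area, cube_volume = mesh_area_and_volume(CUBE_VERTICES, CUBE_TRIANGLES)
```

Because the model is in true scale, the same two sums applied to a specimen mesh would give its area and volume in real-world units, something that is hard to do with calipers on an irregular bone or shell.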

At its core, COPIS functions like a CNC (Computer Numerical Control) machine: multiple cameras move around targeted objects within an imaging chamber, capturing photographs from many precisely controlled viewpoints. These images can then be imported into photogrammetry software such as Agisoft Metashape, RealityScan, or AliceVision to generate 3D models. Photogrammetry works by identifying shared features across overlapping photographs and calculating how those features shift between images; this information is then used to estimate camera positions and reconstruct an object’s geometry as a dense mesh of tiny triangles that together represent the specimen’s surface.
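The idea that a feature's shift between photographs encodes geometry can be shown with the simplest possible case: two identical cameras placed side by side and pointing in the same direction. The Python sketch below is only that simplified case, not what photogrammetry packages actually run (they estimate many camera positions and 3D points jointly); the function name and the numbers are hypothetical.

```python
def depth_from_disparity(focal_length_px, baseline_mm, x_left_px, x_right_px):
    """Distance to a feature seen by two side-by-side, parallel cameras.

    focal_length_px -- focal length expressed in pixels
    baseline_mm     -- distance between the two camera centers
    x_left_px       -- horizontal pixel position of the feature in the left image
    x_right_px      -- horizontal pixel position of the same feature in the right image
    """
    # The shift of the feature between the two images is called the disparity.
    disparity = x_left_px - x_right_px
    if disparity <= 0:
        raise ValueError("feature must shift leftward in the right image")
    # Similar triangles: depth / baseline = focal_length / disparity.
    return focal_length_px * baseline_mm / disparity


# Hypothetical values: a feature that shifts 200 px between two cameras 100 mm apart.
far_point_mm = depth_from_disparity(2000, 100, 1100, 900)
# A feature that shifts twice as much is half as far away.
near_point_mm = depth_from_disparity(2000, 100, 1200, 800)
```

Features close to the cameras shift a lot between images and distant features shift only a little, which is why overlapping photographs from well-separated viewpoints carry enough information to recover a specimen's shape.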

What makes COPIS different from previous approaches is its flexibility and level of automation. Instead of requiring researchers to manually photograph objects from multiple angles, or relying on large arrays of fixed cameras, COPIS allows cameras to move around specimens along programmable paths. This ensures precise and consistent coverage while dramatically reducing both the time required to capture images and the number of cameras needed. COPIS was also designed to use consumer-grade cameras and parts that can be acquired relatively cheaply, or even 3D printed in-house. Additionally, it is no longer necessary to use a turntable (imagine the spinning plate in your microwave oven) for acquiring images. Turntables present challenges for imaging irregularly shaped objects, such as those that are long and thin: as such an object rotates, parts of it can swing out of frame or appear blurry because they fall outside the range of distances at which the camera is in focus.
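As a rough illustration of what a programmable camera path might look like, the Python sketch below generates evenly spaced viewpoints on horizontal rings around a specimen and computes the pan and tilt needed to keep the camera aimed at it. This is not the COPIS software or its path format; the function name, the pose tuple layout, and the dimensions are all assumptions made for the example.

```python
from math import atan2, cos, degrees, hypot, pi, sin


def orbit_viewpoints(center, radius, ring_heights, shots_per_ring):
    """Return a list of (x, y, z, pan_deg, tilt_deg) camera poses.

    center         -- (x, y, z) of the point the camera should look at
    radius         -- horizontal distance from the center to the camera
    ring_heights   -- camera heights relative to the center, one ring each
    shots_per_ring -- number of evenly spaced viewpoints on each ring
    """
    cx, cy, cz = center
    poses = []
    for height in ring_heights:
        for shot in range(shots_per_ring):
            angle = 2 * pi * shot / shots_per_ring
            x = cx + radius * cos(angle)
            y = cy + radius * sin(angle)
            z = cz + height

            # Direction from the camera back to the specimen.
            dx, dy, dz = cx - x, cy - y, cz - z
            pan = degrees(atan2(dy, dx))              # rotation about the vertical axis
            tilt = degrees(atan2(dz, hypot(dx, dy)))  # negative means looking down
            poses.append((x, y, z, pan, tilt))
    return poses


# Hypothetical path: two rings of four shots, one level with the specimen
# and one 100 mm above it, at a horizontal distance of 100 mm.
path = orbit_viewpoints((0, 0, 0), 100, [0, 100], 4)
```

Because the specimen stays still and only the camera poses change, a path like this can be reshaped for a long, thin object (for example, by repeating rings along its length) without any part of it leaving the frame.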

Despite decades of digitization efforts, specimens continue to enter collections faster than they can be digitized, and this widening “digitization gap” limits the accessibility and scientific use of many collections. COPIS helps address this challenge by supporting a wide range of imaging workflows: the system can image individual specimens of variable shapes and sizes, multiple specimens simultaneously, entire collection drawers, specimen labels such as those attached to pinned insects, fluid-preserved material using specialized tools like the COPIS vial scanner, and much more!

As more institutions adopt COPIS, we hope the community will continue to collectively expand and refine its open-source architecture. The system was intentionally designed with the future in mind, and we hope that future updates will integrate other modules such as LiDAR scanning, ultraviolet imaging, and multispectral imaging. With contributions from researchers, engineers, collection managers, and others, COPIS can evolve to meet the imaging needs of museums worldwide and provide a scalable pathway toward a more accessible and digitally connected future for natural history collections.

View and download freely available 3D models of Yale Peabody Museum specimens produced with COPIS at sketchfab.com/yalepeabodymuseum/models

Find the schematics to build your own COPIS and an overview of the system at copis3d.org

Read the full paper here.