Post provided by Michael Smith

Tracking bee movement is anything but an easy task. Electronic tags are often too cumbersome, and extensive electronic systems such as radars are costly to deploy. To investigate how far bees forage, there is a need for a low-cost, low-impact tracking tool with high spatial resolution. In this blog post, Michael Smith discusses the development of retroreflective tags for real-time tracking of foraging bees, as published in their Methods in Ecology and Evolution article ‘A method for low-cost, low-impact insect tracking using retroreflective tags’.

History of tracking bees

Dave Goulson’s “A Sting in the Tale” is a wonderful account of his work studying bumblebees, full of questions and mysteries and the efforts that have gone into solving them. In one chapter he describes scientists trying to estimate how far bumblebees travel to forage, and how important this is for conservation efforts.

“The most obvious approach is to mark the bees in a nest,…and then look to see where the marked bees are foraging. This has been tried a number of times …but no matter how many bees they have marked, and how hard they have then looked for them on flowers, the scientists have invariably seen very few.”

Dave Goulson, “A Sting in the Tale”

Goulson described the alternative: the harmonic radar system developed by Juliet Osborne and Joe Riley. It seemed like the problem was solved, but the expense and complexity of the radar system meant that another method might be useful.

Bees and retroreflectors

The obvious solution to the tracking problem appeared while I was cycling home at night from work. With my headlight set to “blink” I noticed how even very distant road signs would flash on/off amongst the clutter of car headlights and shops. Could we do something similar to track bees? It turns out retroreflectors have been tried in the past to find insects.

First, I thought of using a UV filter to reduce the ‘clutter’ from the background. With assistance from Jonny Sutton (Sheffield Hallam University), we realised that this approach worked really well with just visible light. The idea was that bees could be tagged as before, but this system could then be deployed to find them again over a large area, making re-observation far more likely (and potentially automated).

Overcoming logistics

The main problem we encountered in the early stages of development was being able to see the bee from a ground-based camera. Foliage often obscured the bee and the flat tag we initially used, so it was difficult to get a clear view from the side. This led to a less-fruitful series of experiments trying to get the tracking system into the air to allow it to look down at the bee. To lift it, we tried a UAV (drone), a tethered balloon (terrifying, if windy), and a 10 m high segmented temporary (radio-)mast (with the potential to fall on someone). All were awkward and complicated.

Another problem was finding a suitable field site. This is an ongoing challenge for us and many other researchers. For the re-observation study in particular the project needed access to a large area of land.

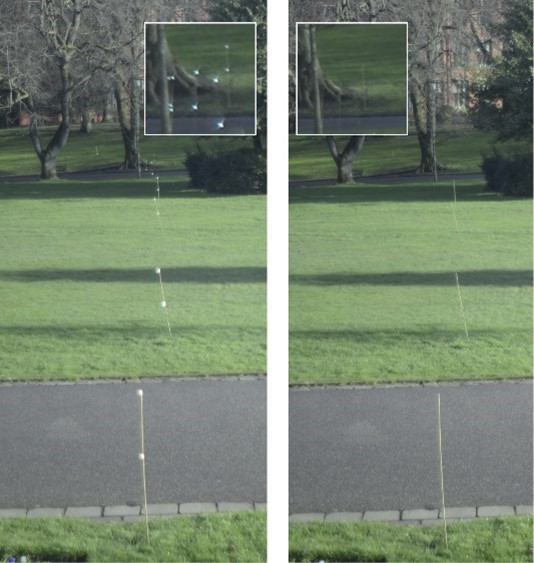

False positives were also a problem, mostly when taking photos from nearer the ground. If the system is going to support re-observation, then we need to be sure that the thing it has detected is really the tagged bee. Over the winter of 2019 we collected a more systematic set of images of the tags in some relatively challenging environments: a suburban road with the clutter of cars, buildings and trees, and a nearby park. This gave us a good set of true and false positives that we could use to carefully design the algorithm to mitigate each source of noise.

There are three things that we can look for to determine whether we’ve really found the tagged bee, or just some other clutter.

- Is the bright dot only in the flash photo (and not in the no-flash photo)?

- Does it move between photos?

- Is it a tiny dot, or is it a larger blob/shape?

We can also consider:

- Do we often see false positives in this part of the image?

Our algorithm uses these features to remove false positives. At its simplest the system just needs to subtract the no-flash photo from the flash photo to create a ‘difference’ photo. But it can improve on this by:

- Dilating the no-flash photo (that is, expanding the bright dots in it) before subtracting: a bright white butterfly that has moved a little between photos will then still ‘cancel out’ its counterpart in the flash photo.

- Comparing against recent history: we take pairs of flash/no-flash photos regularly and compute a difference image for each pair, so another trick is to subtract the maximum of the last few difference images from the current one. This removes reflective objects that haven’t moved (such as litter or stationary cars).

- Filtering by size: the final problem is the flash lighting up nearby dust, distant moving cars and so on. These objects are typically bigger in the image than the single bright dot of the bee, so a few simple heuristics on the size of the blob or dot are enough to decide which detections might be the real bee and which are not.
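The three steps above can be sketched in a few lines of Python. This is only an illustration of the idea, not the code used in the article: the function names, the dilation radius, the brightness threshold, the maximum blob size and the choice of 4-connected blobs are all assumptions made for this example, and plain nested lists stand in for greyscale images so that it runs without any extra libraries.

```python
def dilate(img, radius=1):
    """Grey-scale dilation: each pixel becomes the max of its neighbourhood."""
    h, w = len(img), len(img[0])
    return [[max(img[yy][xx]
                 for yy in range(max(0, y - radius), min(h, y + radius + 1))
                 for xx in range(max(0, x - radius), min(w, x + radius + 1)))
             for x in range(w)] for y in range(h)]


def difference(flash, no_flash, radius=1):
    """Flash photo minus the dilated no-flash photo, clipped at zero."""
    background = dilate(no_flash, radius)
    return [[max(0, f - b) for f, b in zip(f_row, b_row)]
            for f_row, b_row in zip(flash, background)]


def suppress_static(current, previous_diffs):
    """Subtract the per-pixel maximum of earlier difference images."""
    if not previous_diffs:
        return current
    h, w = len(current), len(current[0])
    return [[max(0, current[y][x] - max(d[y][x] for d in previous_diffs))
             for x in range(w)] for y in range(h)]


def find_small_blobs(img, threshold=100, max_pixels=4):
    """Return (row, col, size) for each bright blob of at most max_pixels pixels."""
    h, w = len(img), len(img[0])
    seen, hits = set(), []
    for y in range(h):
        for x in range(w):
            if img[y][x] < threshold or (y, x) in seen:
                continue
            # Flood fill to collect one 4-connected bright blob.
            stack, blob = [(y, x)], []
            seen.add((y, x))
            while stack:
                cy, cx = stack.pop()
                blob.append((cy, cx))
                for ny, nx in ((cy + 1, cx), (cy - 1, cx), (cy, cx + 1), (cy, cx - 1)):
                    if (0 <= ny < h and 0 <= nx < w and (ny, nx) not in seen
                            and img[ny][nx] >= threshold):
                        seen.add((ny, nx))
                        stack.append((ny, nx))
            if len(blob) <= max_pixels:  # large blobs are treated as clutter
                py, px = max(blob, key=lambda p: img[p[0]][p[1]])
                hits.append((py, px, len(blob)))
    return hits


def detect(flash, no_flash, previous_diffs):
    """Run all three steps; returns (difference image, candidate tag positions)."""
    diff = difference(flash, no_flash)
    cleaned = suppress_static(diff, previous_diffs)
    return diff, find_small_blobs(cleaned)
```

In use, each new flash/no-flash pair would be passed to `detect` together with the last few difference images, and the returned difference image appended to that short history. Subtracting the maximum of the history (rather than the mean) is deliberately aggressive: a pixel that was bright in any recent pair is suppressed.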

Left running, the system can monitor the bees moving around a field site for hours or days.

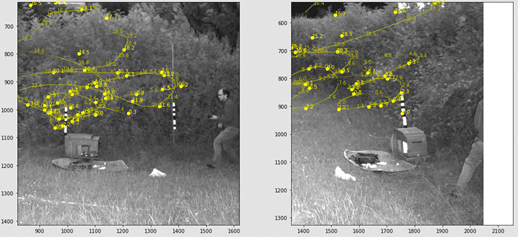

Last year, thanks to a second grant from the Eva Crane Trust, and support from Professor Natalie de Ibarra and Katy Chapman, I put together four tracking systems to deploy around a nest at a field site. By combining the photos from the four systems (using a bit of probabilistic modelling), we could reconstruct the learning flight of the tagged bees in 3D.

Future work

Going forward, some of the remaining bugs and issues need to be fixed so that other interested research groups can use the system. One interesting future application of this approach would be to follow the life of a solitary bee, using lots of tracking systems across its range. Watch this space!

To read the full Methods in Ecology and Evolution article, click on the following link: A method for low-cost, low-impact insect tracking using retroreflective tags.