Throughout March, we are featuring articles shortlisted for the 2025 Robert May Prize. The Robert May Prize is awarded by the British Ecological Society each year for the best paper in Methods in Ecology and Evolution written by an early career author. Jenna Kline’s article ‘Studying collective animal behaviour with drones and computer vision’ is one of those shortlisted for the award.

About the paper

What is your shortlisted paper about, and what are you seeking to answer with your research?

Our paper provides a comprehensive overview and field guide for researchers working at the intersection of computer vision, aerial robotics, and behavioral ecology. It started as a literature review: I wanted to learn how to automate drone missions for wildlife behavior studies using AI, and I discovered it was a wide-open area for research. We synthesized the papers I found during my review and summarized state-of-the-art methodologies for AI-driven drone wildlife behavior studies. To bridge the various disciplines, we map specific computational tasks to their ecological applications and illustrate computer vision pipelines that infer collective animal behavior from drone imagery.

Were you surprised by anything when working on it? Did you have any challenges to overcome?

The biggest challenge was synthesizing research across computer science, robotics, ecology, and environmental monitoring, especially since these fields often use the same terms to mean very different things. For example, “tracking” in computer vision refers to following objects across video frames, while in behavioral ecology it typically means following animal movements over time and space. We also found that reported model accuracy varies widely by computer vision task, species, habitat, and evaluation metric, making meaningful comparisons between studies difficult. These gaps highlighted a real opportunity for cross-disciplinary collaboration in AI-driven animal ecology.
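To make the computer vision sense of “tracking” concrete, here is a minimal Python sketch of frame-to-frame association using bounding-box overlap (intersection over union, IoU). It is an illustration only, not the method used in the paper: the function names `iou` and `associate`, the box format, and the 0.3 overlap threshold are all assumptions chosen for the example, and real pipelines typically use more robust trackers.

```python
from typing import Dict, List, Tuple

# Hypothetical box format: (x_min, y_min, x_max, y_max) in pixels.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def associate(
    tracks: Dict[int, Box], detections: List[Box], min_iou: float = 0.3
) -> Dict[int, Box]:
    """Greedily link each detection in the new frame to a track from the previous frame.

    A detection continues the unmatched track it overlaps most (if IoU >= min_iou);
    otherwise it starts a new track. Tracks with no matching detection are dropped.
    Simplification: IDs of dropped tracks may be reused in later frames.
    """
    updated: Dict[int, Box] = {}
    unmatched = set(tracks)
    next_id = max(tracks, default=-1) + 1
    for det in detections:
        best_id, best_score = None, min_iou
        for tid in sorted(unmatched):
            score = iou(tracks[tid], det)
            if score >= best_score:
                best_id, best_score = tid, score
        if best_id is None:
            best_id = next_id  # no sufficient overlap: start a new track
            next_id += 1
        else:
            unmatched.discard(best_id)
        updated[best_id] = det
    return updated


# Two animals detected in frame 1, then again (slightly moved) in frame 2.
frame1 = associate({}, [(0, 0, 10, 10), (50, 50, 60, 60)])
frame2 = associate(frame1, [(52, 51, 62, 61), (1, 0, 11, 10)])
```

In this sketch, each animal keeps its ID from `frame1` to `frame2` even though the detections arrive in a different order. That is the narrow, frame-level meaning of “tracking”, as opposed to the ecological sense of following movement over time and space.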

What is the next step in this field going to be?

Smart sensors, powered by edge AI, will serve as intelligent assistants in the field, not replacing human expertise, but augmenting and expanding it. Autonomous drones will greatly expand our capabilities to scale and standardize drone studies while reducing disturbance and improving data quality. Looking further ahead, I believe smart multimodal sensing networks will be the future of long-term ecosystem monitoring. Autonomous drones will serve not as standalone tools, but as dynamic components of a larger environmental sensing system, including camera traps, bioacoustics sensors, GPS tags, satellites, and edge compute devices.

What are the broader impacts or implications of your research for policy or practice?

Wildlife behaviors can tell us a great deal about overall ecosystem health, such as how animals respond to environmental changes, and whether conservation policies and management plans are having their intended effect. By providing guidelines for AI-driven animal ecology drone studies adaptable to various species and habitats, our work aims to help researchers collect high-quality behavior data while minimizing disruption to ecosystems. Standardizing these methods can make behavioral monitoring more accessible and repeatable, ultimately supporting evidence-based conservation and biodiversity protection.

About the author

How did you get involved in ecology?

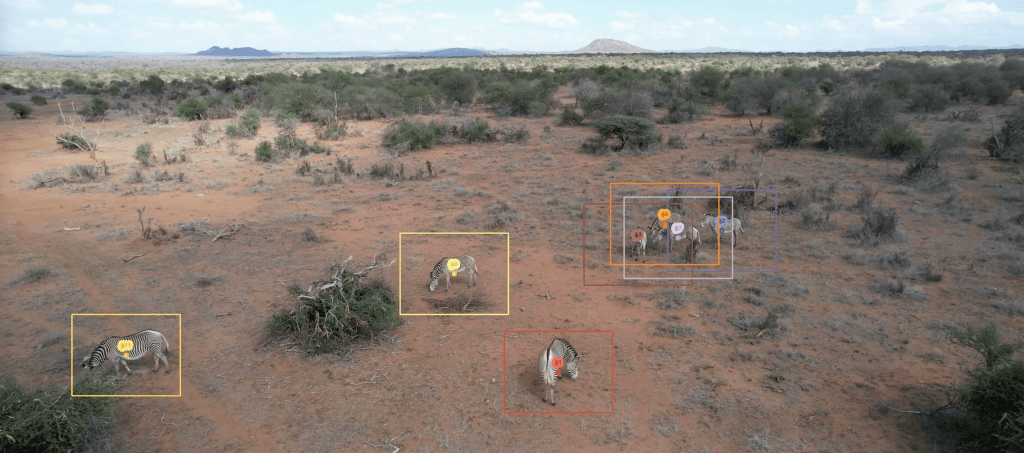

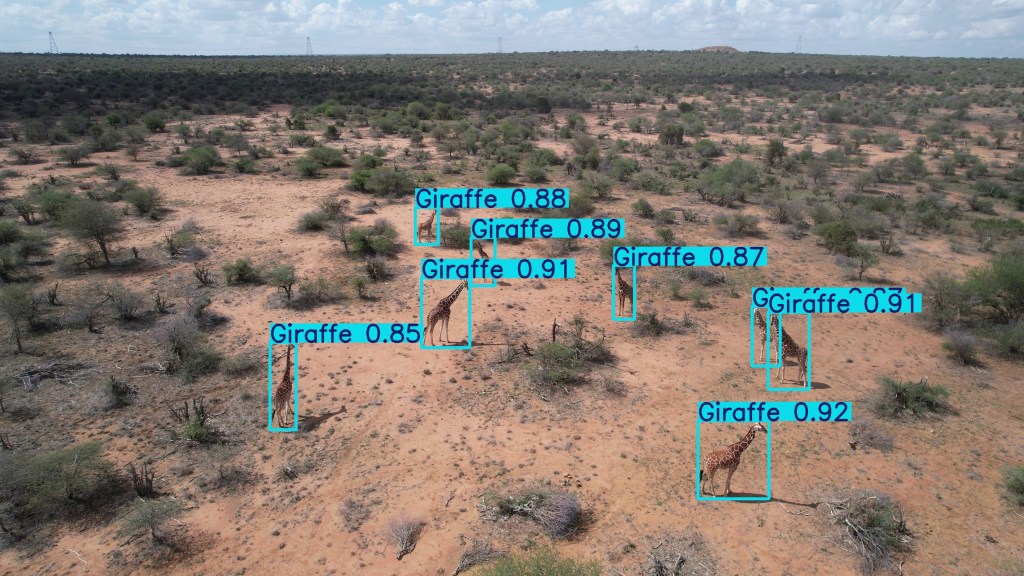

My first introduction to ecology was through a field ecology and AI course with the Imageomics Institute during my graduate studies at The Ohio State University. Through this course, I traveled to Mpala Research Centre in Kenya to study the social dynamics of zebras and giraffes with Dr. Tanya Berger-Wolf and Dr. Daniel Rubenstein. I piloted drones to collect video behavior data, then led the team in annotating the data to train a computer vision model. This produced the Kenyan Animal Behavior Recognition (KABR) dataset and sparked my interest in developing AI-driven systems for ecology and conservation.

What is your current position?

I am a PhD candidate in Computer Science and Engineering at The Ohio State University.

Have you continued the research your paper is about?

Yes! I’ve continued developing autonomous drone missions that integrate edge-based computer vision models to improve drone data collection for animal behavior studies. I recently tested the system in the field with my wonderful colleagues from WildDrone at Ol Pejeta Conservancy in Kenya. I’ve also expanded into edge AI for multimodal sensor networks, studying distributed systems that combine drones, camera traps, bioacoustic sensors, and citizen science platforms for environmental monitoring.

What one piece of advice would you give to someone in your field?

Seek out collaborators outside your discipline. Some of the most exciting research is happening at the intersection of computer science and animal ecology, but these fields rarely overlap. Building interdisciplinary teams takes effort and time, including learning each other’s terminology, methods, and priorities, but I think it’s where the most impactful (and fun!) work emerges.