Post provided by Hiroshi Hakoyama.

Rethinking extinction probability as a conservation endpoint

Treating species extinction as the endpoint of conservation underpins how priorities are set under the IUCN Red List and CITES. At their core, these frameworks decide which populations or taxa should be prioritised for conservation effort. Yet Population Viability Analysis (PVA), which aims to quantify extinction probability itself, has long been debated. A recurring concern is that, for short and noisy ecological time series, uncertainty may be so large that confidence intervals for extinction probability span nearly the entire 0-1 range, making the results practically useless.

This is not just a technical issue. If extinction probability cannot be estimated with meaningful precision, then the whole framework of using species extinction as an endpoint for conservation prioritisation is called into question. In this paper, I returned to that problem from first principles.

The w-z method and theoretical advances in estimation

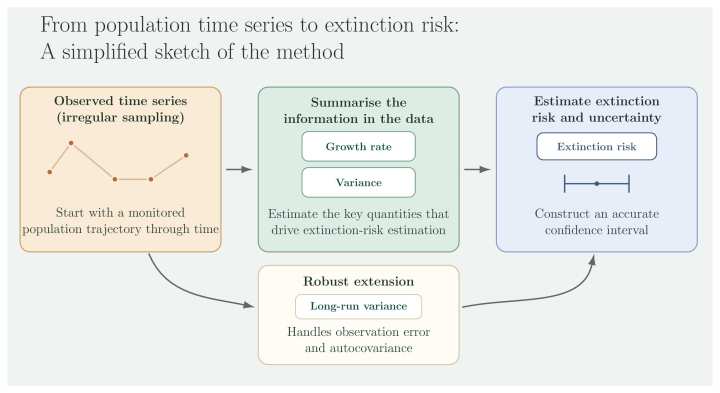

The model I used is the drift-Wiener process, one of the most basic and most extensively studied stochastic models of extinction dynamics. Rather than working directly with the original parameters, I worked with two transformed quantities, w and z, which together determine extinction probability. This makes it possible to handle the distribution of the maximum-likelihood estimators analytically and to derive a method that provides accurate confidence intervals for extinction probability. This is the w-z method.
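As a rough illustration of the quantity being estimated, the sketch below evaluates the classical first-passage formula for the probability that a Wiener process with drift reaches a lower threshold within a given time horizon. The function and argument names are hypothetical, and the definitions of `w` and `z` used here are an assumption: they are one convenient parametrisation in which the formula depends on the parameters only through two numbers, and they may differ from the definitions in the paper and in the extr package. The sketch computes a point value only and does not attempt the confidence-interval construction.

```python
import math


def norm_cdf(x):
    """Standard normal CDF, written with erfc for accuracy in the tails."""
    return 0.5 * math.erfc(-x / math.sqrt(2.0))


def extinction_probability(mu, sigma, x0, x_ext, t):
    """Probability that log-abundance starting at x0 hits x_ext within time t.

    mu and sigma are the drift and diffusion of log-abundance per unit time
    (sigma > 0 and t > 0 are assumed). With d = x0 - x_ext, this sketch uses
    the ASSUMED parametrisation
        w = (d + mu * t) / (sigma * sqrt(t))
        z = (d - mu * t) / (sigma * sqrt(t))
    so that the first-passage probability is
        G = Phi(-w) + exp((z**2 - w**2) / 2) * Phi(-z).
    """
    d = x0 - x_ext
    if d <= 0:
        return 1.0  # already at or below the threshold
    s = sigma * math.sqrt(t)
    w = (d + mu * t) / s
    z = (d - mu * t) / s
    first = norm_cdf(-w)
    tail = norm_cdf(-z)
    # (z**2 - w**2) / 2 simplifies to -2 * mu * d / sigma**2; the product is
    # formed in log space so a large exponent and a tiny tail do not overflow.
    if tail > 0.0:
        second = math.exp(-2.0 * mu * d / sigma**2 + math.log(tail))
    else:
        second = 0.0
    return min(1.0, first + second)


if __name__ == "__main__":
    # Hypothetical values chosen only to exercise the function.
    p = extinction_probability(mu=-0.02, sigma=0.2, x0=math.log(1000.0),
                               x_ext=0.0, t=100.0)
    print(f"extinction probability within 100 time units: {p:.4f}")
```

With zero drift the expression reduces to twice the normal tail probability (the reflection principle), and with positive drift and a very long horizon it approaches `exp(-2 * mu * d / sigma**2)`, which gives two quick sanity checks on any implementation.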

The main result gives a clear answer to the long-running debate over PVA. The key point is not simply that precision depends on sample size. In this setting, the dependence on effect size, that is, on how far the true extinction probability lies from the point of maximum uncertainty, can be characterised analytically, and it turns out to matter a great deal: when the true extinction probability is sufficiently low or sufficiently high, reliable inference is possible even from time series whose length is only around 10-20% of the relevant time horizon. At least under the drift-Wiener process, the view that PVA is always uninformative when data are limited is therefore not generally correct.

Extending the framework to real-world data: the OEAR approach

The paper also introduces an extension for more realistic data situations: the observation-error-and-autocovariance-robust estimator, or OEAR. Ecological data are rarely ideal. Observation error is common, and time series often contain short-run autocorrelation. In OEAR, the naive ML estimate of the diffusion term is replaced by a long-run-variance-based estimator, using HAC methods with AR(1) pre-whitening and a Bartlett kernel. This gives a more robust approach in the presence of additive observation error and short-run dependence.
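The idea of replacing the naive variance with a long-run variance can be sketched as follows. If observed log-abundance is the true value plus independent observation error with standard deviation tau, the ordinary variance of the observed increments is inflated to about sigma^2 + 2 tau^2, whereas the differenced error term contributes nothing to the long-run variance, which stays at sigma^2. The code below is a minimal sketch of a pre-whitened Bartlett-kernel (Newey-West type) estimator, not the OEAR implementation: the bandwidth rule `4 * (m / 100) ** (2 / 9)`, the cap of 0.97 on the AR(1) coefficient, and all function names are assumptions made for illustration.

```python
import math
import random


def simulate_increments(n, mu, sigma, tau, seed=0):
    """Increments of observed log-abundance: drift-Wiener state plus
    independent Gaussian observation error with standard deviation tau."""
    rng = random.Random(seed)
    x = 0.0
    observed = [x + tau * rng.gauss(0.0, 1.0)]
    for _ in range(n):
        x += mu + sigma * rng.gauss(0.0, 1.0)
        observed.append(x + tau * rng.gauss(0.0, 1.0))
    return [b - a for a, b in zip(observed, observed[1:])]


def naive_variance(increments):
    """Maximum-likelihood variance of the increments (divisor n)."""
    n = len(increments)
    mean = sum(increments) / n
    return sum((v - mean) ** 2 for v in increments) / n


def long_run_variance(increments, max_rho=0.97):
    """Long-run variance via AR(1) pre-whitening and a Bartlett kernel."""
    n = len(increments)
    if n < 3:
        raise ValueError("need at least three increments")
    mean = sum(increments) / n
    u = [v - mean for v in increments]

    # Step 1: pre-whiten with a least-squares AR(1) fit, capping |rho|
    # so that the recolouring step below stays well behaved.
    denom = sum(v * v for v in u[:-1])
    rho = sum(u[t] * u[t - 1] for t in range(1, n)) / denom if denom > 0 else 0.0
    rho = max(-max_rho, min(max_rho, rho))
    e = [u[t] - rho * u[t - 1] for t in range(1, n)]
    m = len(e)

    # Step 2: Bartlett-kernel long-run variance of the residuals.
    # The bandwidth is an assumed rule of thumb, not the paper's choice.
    lag = min(m - 1, int(4 * (m / 100.0) ** (2.0 / 9.0)))

    def gamma(k):
        return sum(e[t] * e[t - k] for t in range(k, m)) / m

    lrv_e = gamma(0) + 2.0 * sum(
        (1.0 - k / (lag + 1.0)) * gamma(k) for k in range(1, lag + 1)
    )

    # Step 3: recolour to undo the AR(1) filter.
    return lrv_e / (1.0 - rho) ** 2


if __name__ == "__main__":
    # Hypothetical settings: process variance 0.01, observation-error sd 0.1.
    inc = simulate_increments(2000, mu=-0.01, sigma=0.1, tau=0.1, seed=1)
    print("naive variance:   ", naive_variance(inc))
    print("long-run variance:", long_run_variance(inc))
```

On short series the picture is less clear-cut, because the long-run variance estimator is itself noisy; that trade-off is one reason to treat the two estimators as complementary rather than as rivals.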

An important point, however, is that OEAR is not simply a superior replacement for naive ML. Which method performs better depends on the amount of data, the magnitude of observation error, and the nature of the population dynamics itself. The two are best seen not as competing methods, but as complementary estimators, with naive ML as the baseline that OEAR is designed to supplement. OEAR also has a practical advantage: it does not require the heavy machinery of state-space modelling.

The paper does not stop with the idealised baseline model. I also carried out analytical and numerical sensitivity analyses for observation error, short-run dependence, coloured noise, weak density dependence, and CPUE nonlinearity. One of the strengths of the study is that its main conclusions do not depend on a very narrow set of assumptions.

Empirical application: the case of the Japanese eel

As an empirical application, I analysed two national Japanese eel (Anguilla japonica) harvest time series covering 1957 to 2020. The results were striking. Extinction probabilities under IUCN Criterion E remained far below the thresholds for threatened categories, even when confidence intervals were taken into account. This contrasts sharply with the current classification of Japanese eel as Endangered under Criterion A.

That discrepancy is also consistent with a theoretical result developed later in the paper. Under the drift-Wiener process, for sufficiently large populations, the decline-based part of Criterion A systematically overestimates the extinction risk quantified by Criterion E. Because IUCN classification can be triggered when any one of several criteria is met, this kind of systematic overestimation creates the possibility of false-positive threat classification: species that are not in fact threatened may still be ranked as threatened. If that happens, conservation effort cannot be directed fully towards the taxa that are actually facing higher extinction risk.
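A small numerical sketch may help to see why population size matters here. It takes a purely hypothetical population whose log-abundance drifts downward by ln 2 per decade, a decline rate that would meet the 50% reduction threshold used for Endangered under Criterion A, and asks how likely it is to fall to a single individual within 20 years, to be compared with the 20% probability threshold for Endangered under Criterion E. The value of sigma, the quasi-extinction threshold of one individual, the use of years rather than generations, and all names are assumptions for illustration; none of the numbers come from the eel analysis.

```python
import math


def norm_cdf(x):
    """Standard normal CDF."""
    return 0.5 * math.erfc(-x / math.sqrt(2.0))


def hit_probability(mu, sigma, d, t):
    """Probability that a drift-Wiener process falls by d (> 0) within time t."""
    s = sigma * math.sqrt(t)
    tail = norm_cdf(-(d - mu * t) / s)
    # Combine the exponential factor and the tail in log space to avoid overflow.
    if tail > 0.0:
        second = math.exp(-2.0 * mu * d / sigma**2 + math.log(tail))
    else:
        second = 0.0
    return min(1.0, norm_cdf(-(d + mu * t) / s) + second)


# Hypothetical scenario (assumed values, not estimates from any data set).
MU = math.log(0.5) / 10.0   # log-abundance drift: halving per decade
SIGMA = 0.3                 # assumed environmental noise per sqrt(year)
HORIZON = 20.0              # years, as in the Criterion E rule for Endangered
EN_THRESHOLD = 0.20         # extinction probability threshold for Endangered


def criterion_e_probability(n0, n_ext=1.0):
    """Extinction probability over HORIZON for initial abundance n0."""
    return hit_probability(MU, SIGMA, math.log(n0 / n_ext), HORIZON)


if __name__ == "__main__":
    for n0 in (10, 1_000, 10_000_000):
        p = criterion_e_probability(n0)
        verdict = "meets" if p >= EN_THRESHOLD else "does not meet"
        print(f"N0 = {n0:>10,}: P(extinct in 20 y) = {p:.3g} ({verdict} 20%)")
```

Under these assumed values, the same rate of decline implies an appreciable extinction probability for a population of ten individuals and a vanishingly small one for a population of ten million, which is the sense in which a decline-based rule can overstate extinction risk for very abundant species.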

This issue is not unique to Japanese eel. The same logic is likely to apply more broadly to marine species with large population sizes.

This study presents a framework for estimating extinction risk that can be used directly in conservation assessment by integrating mathematical models of population dynamics with the statistical theory of confidence intervals. The core w-z method is also available in the R package extr on CRAN.

I hope this paper will be useful not only to researchers applying IUCN Criterion E, but also to anyone interested in how uncertainty should be handled in conservation decision-making, and in how different Red List criteria relate to one another.

Read the full paper here.

Post edited by Sthandiwe Nomthandazo Kanyile.